Compress Prose to AXL v3

Deterministic compression into structured packets. No LLM needed. Fewer tokens, lower cost.

Free, forever. Apache 2.0, community-stewarded by AXL Protocol. Sponsors keep the lights on and unlock unlimited usage; nobody pays to use the protocol.

How It Works

Paste Text

Drop in any English prose: docs, articles, instructions, or transcripts.

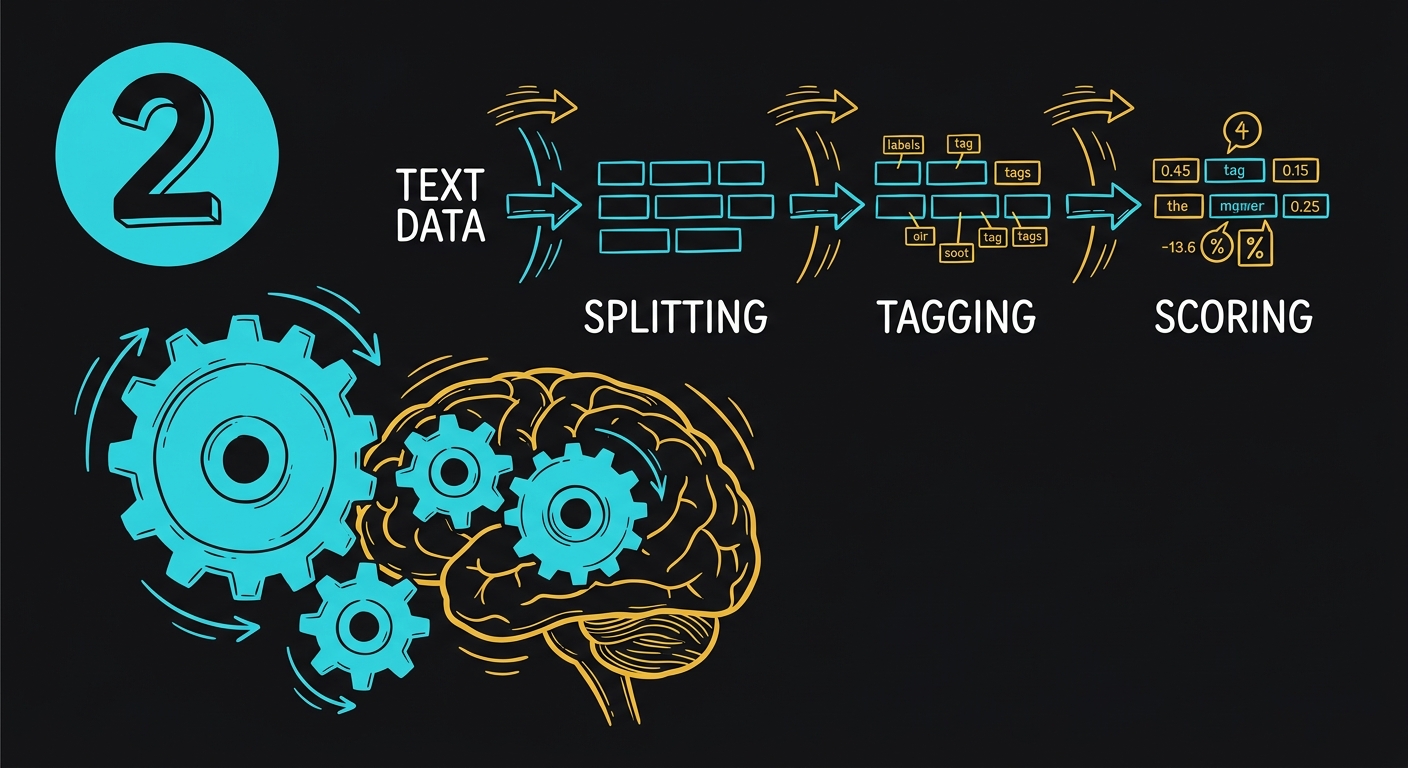

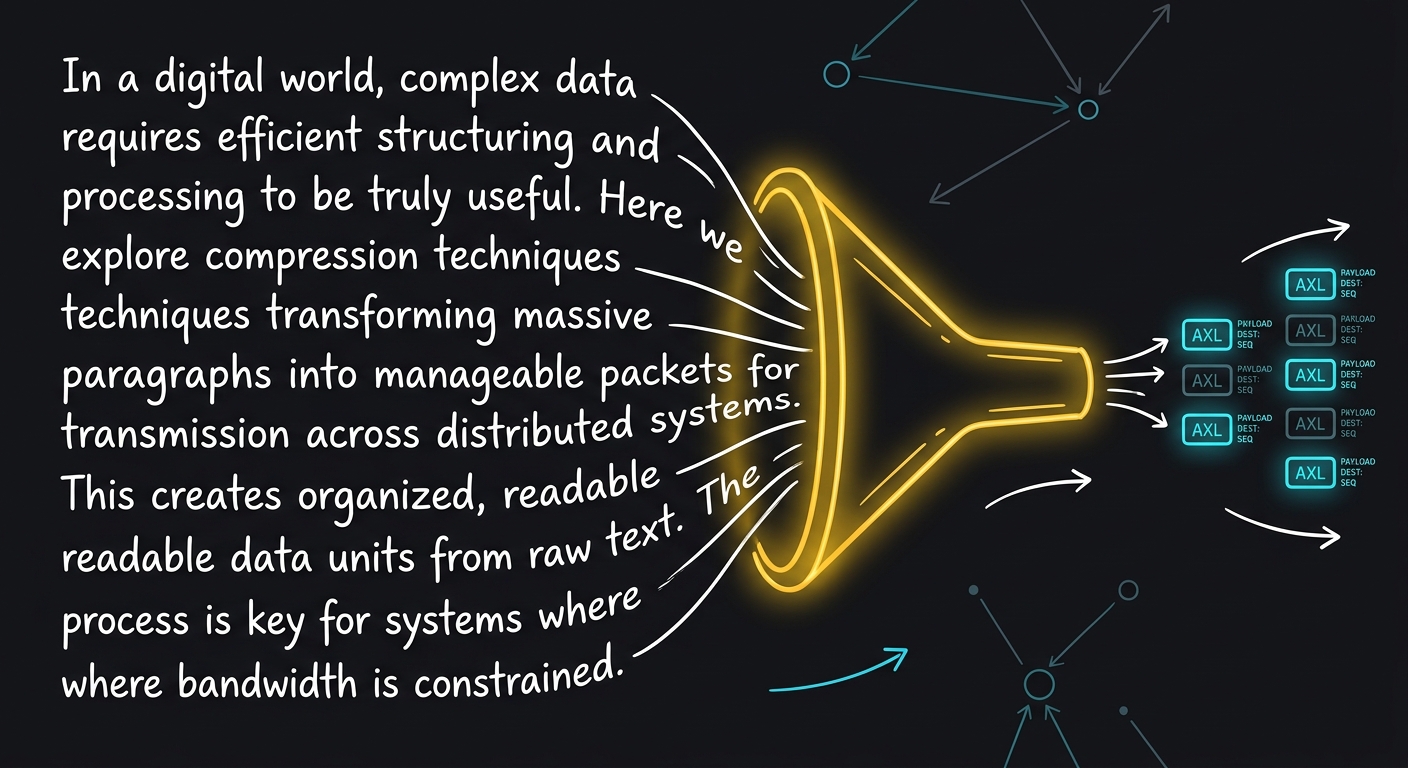

Compress

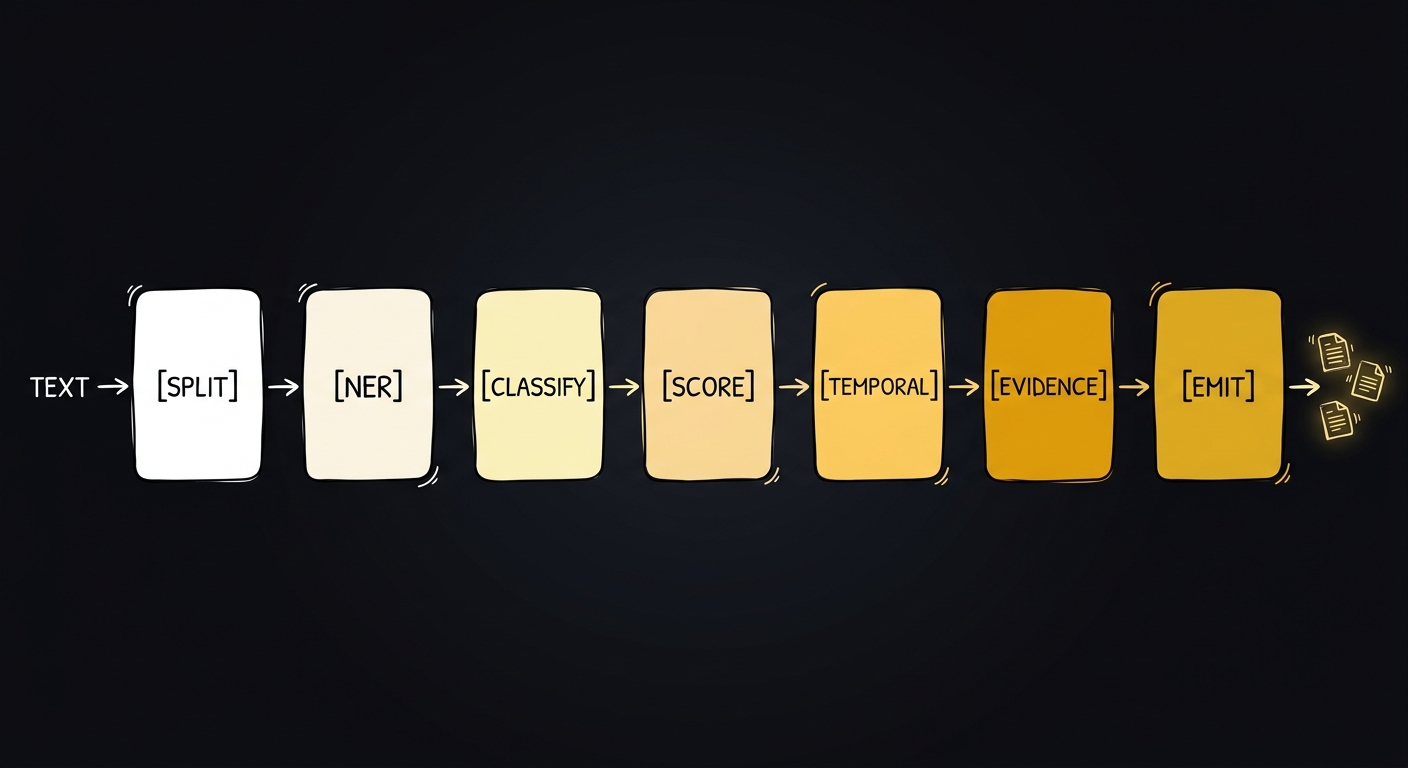

A deterministic NLP pipeline parses your text into structured AXL v3 packets. No LLM.

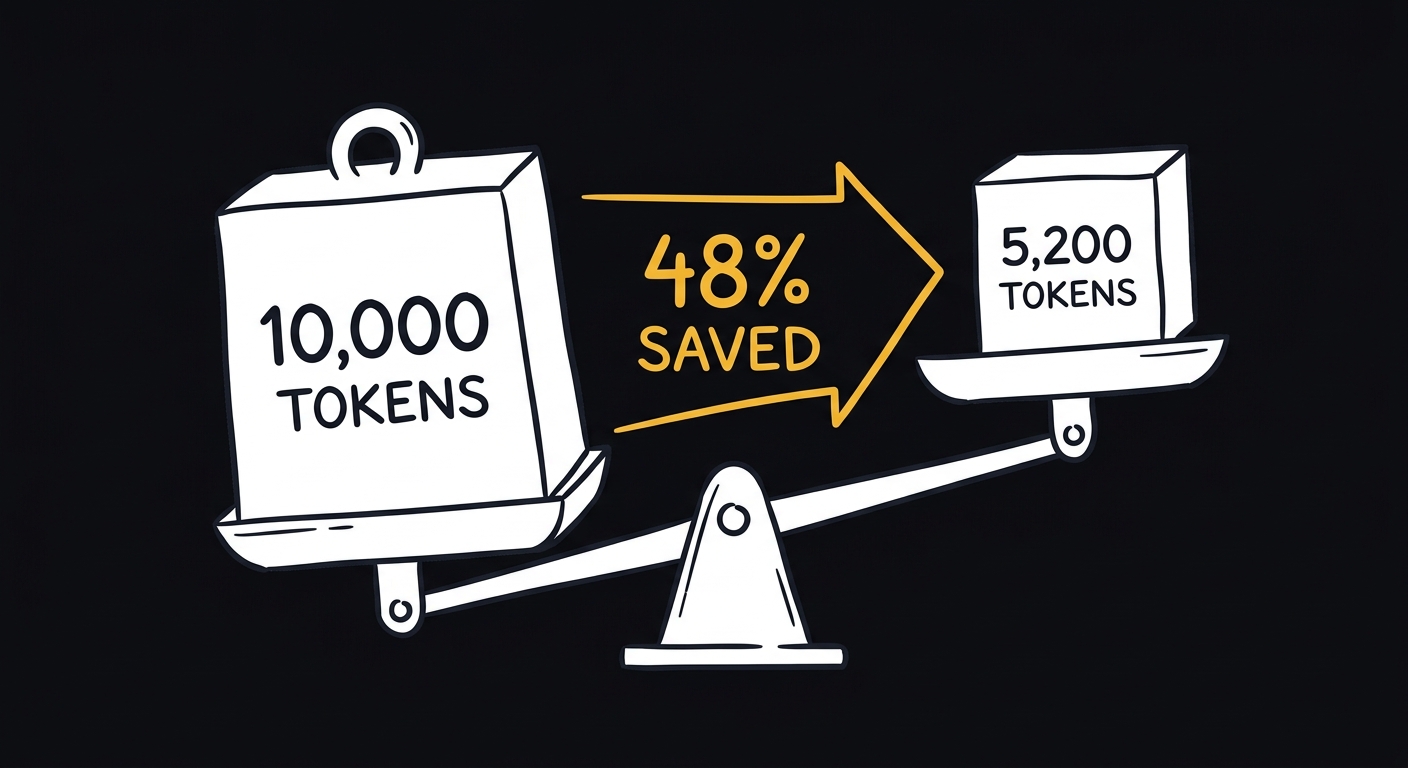

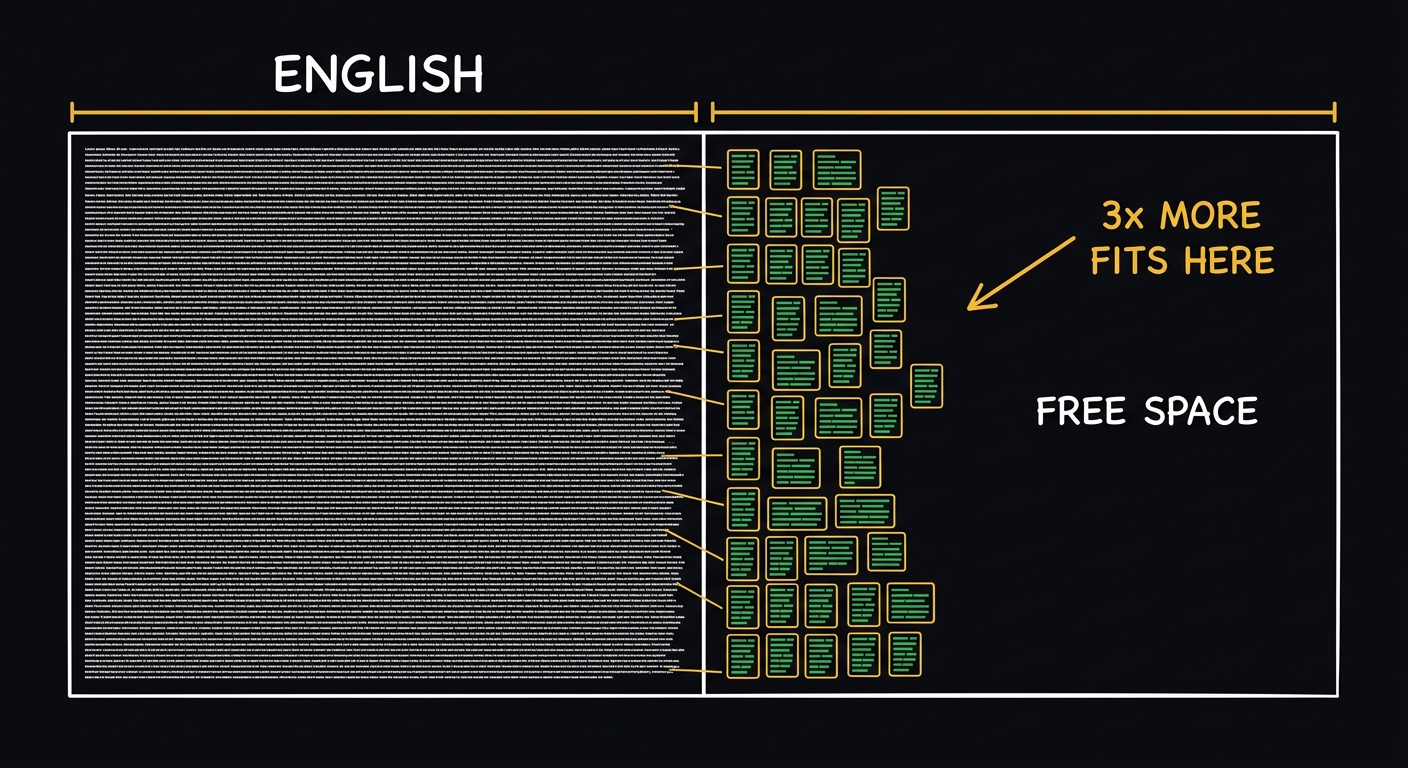

Save Tokens

Fewer tokens = lower API costs. Deterministic, reversible, round-trip proven.

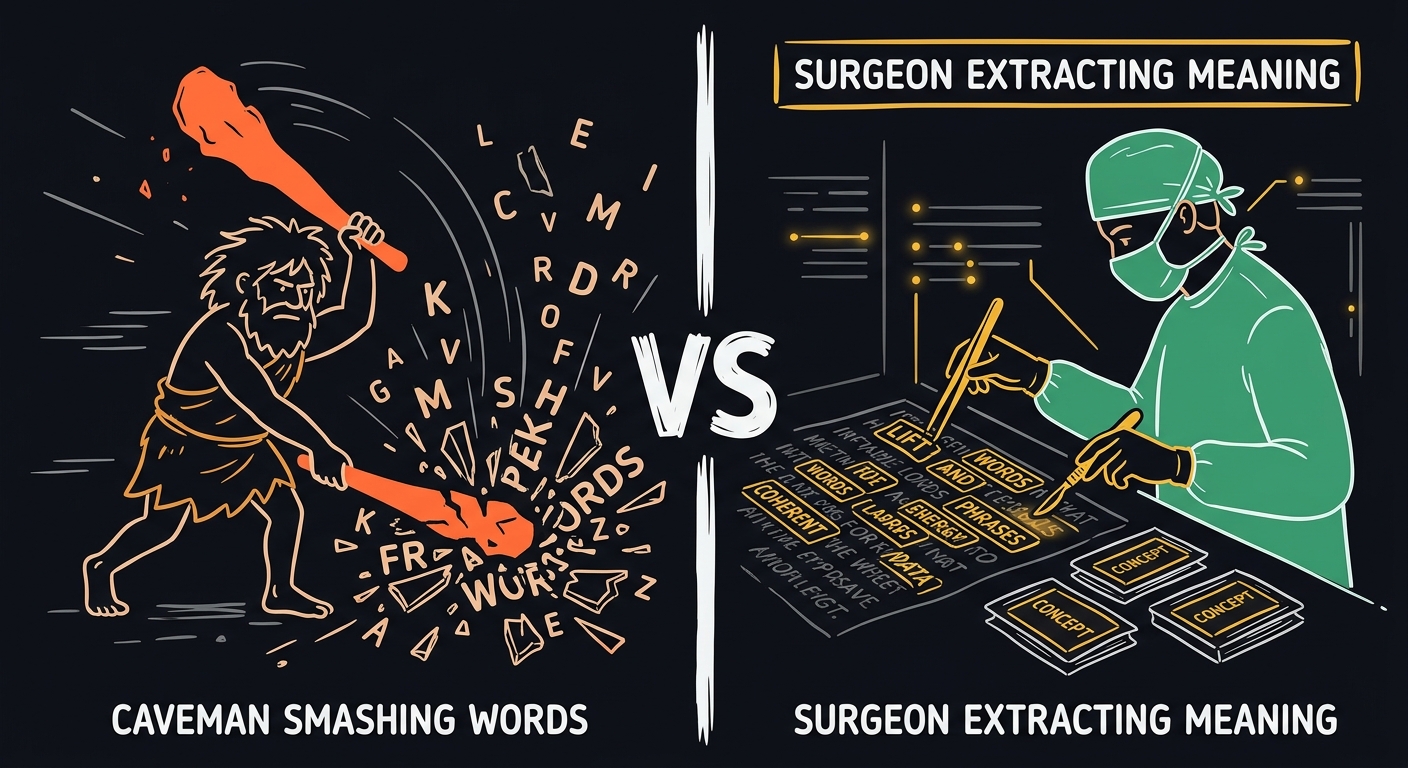

Why Not Just Strip Tokens?

No structure. No types. No confidence scores.

No operation codes. No evidence chains.

Cannot decompress back to prose.

| Property | Token stripping |
| --- | --- |
| Ratio | |
| Structure | None |
| Round-trip | No |
| Types | No |
| Metrics | No |

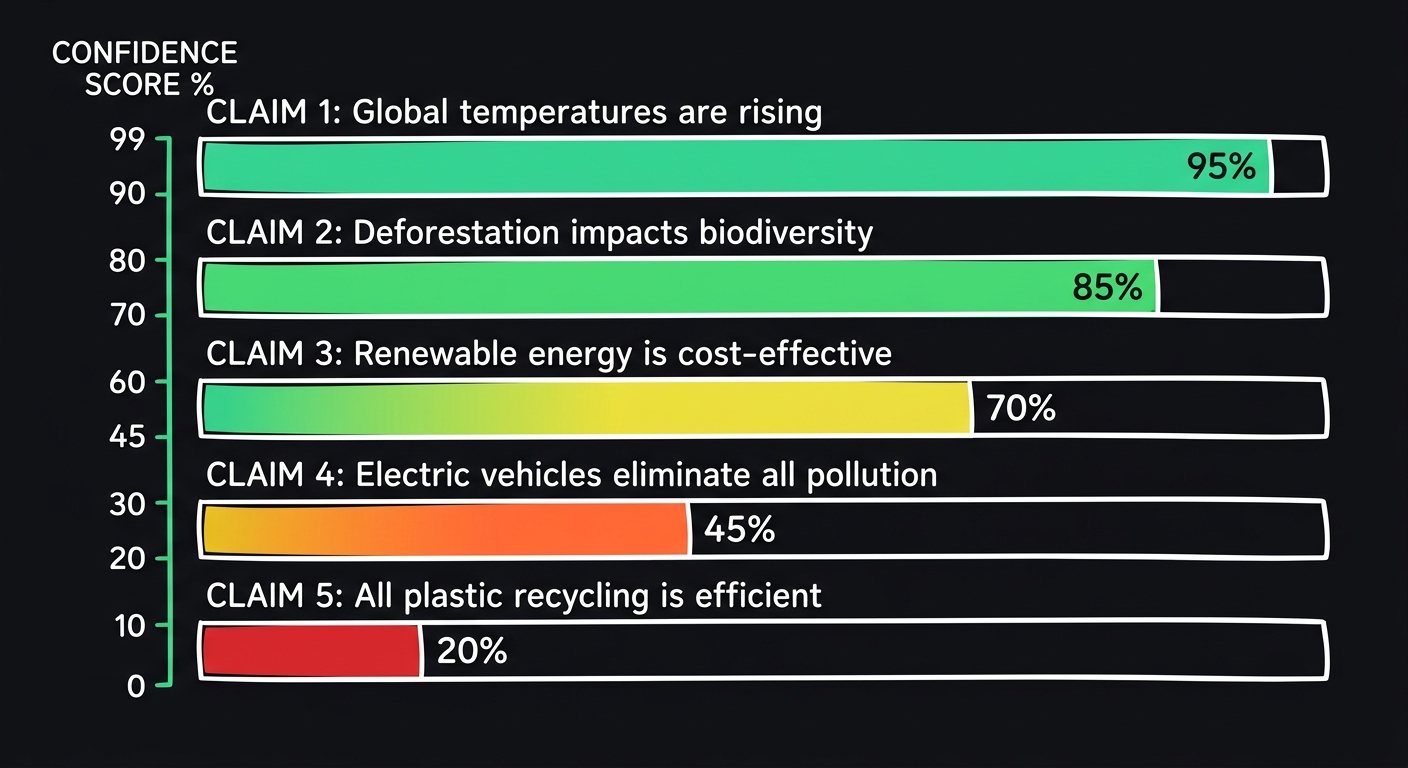

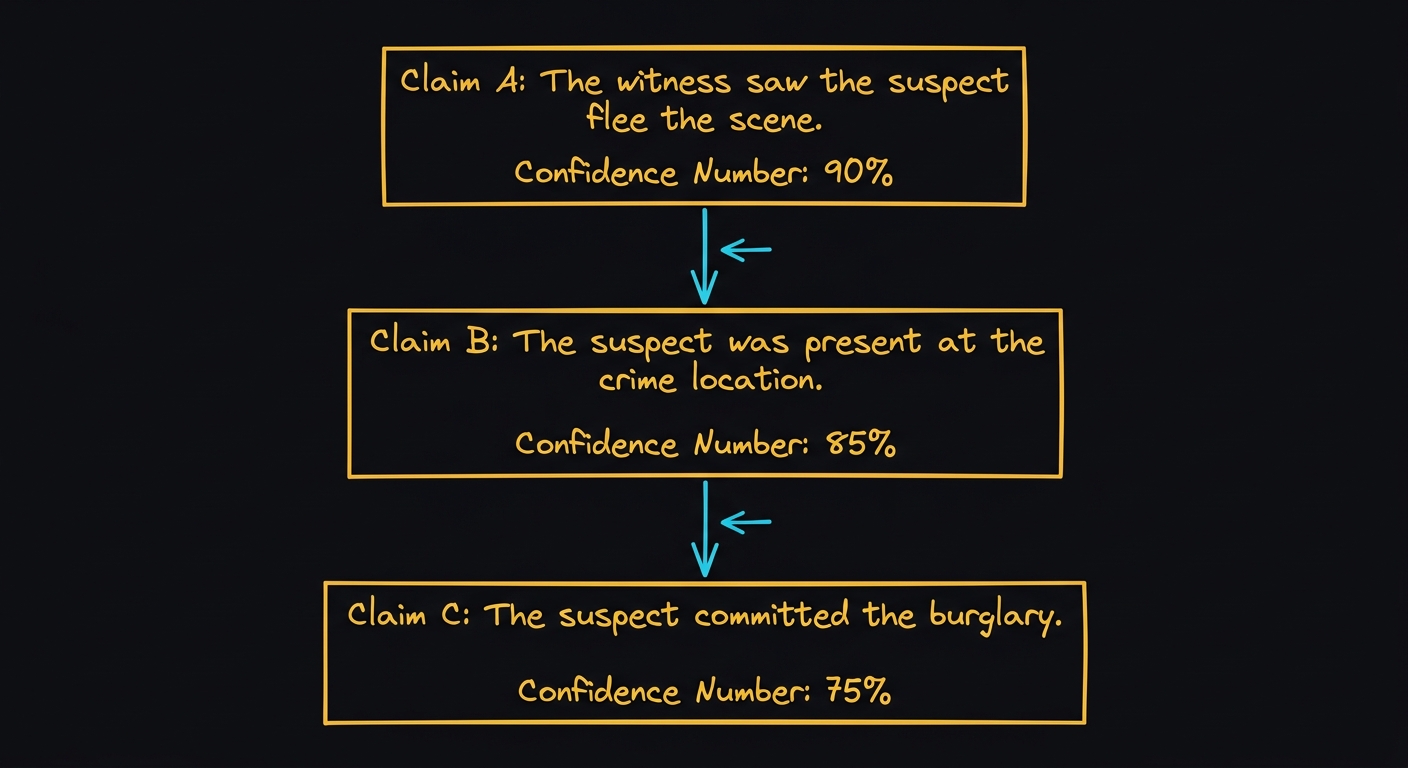

Typed subjects. Confidence scores. Evidence chains.

7 operations. 6 tag types. Machine-parseable.

Decompresses back to full prose.

| Ratio | 1.96x |

| Structure | Compositional grammar |

| Round-trip | Yes |

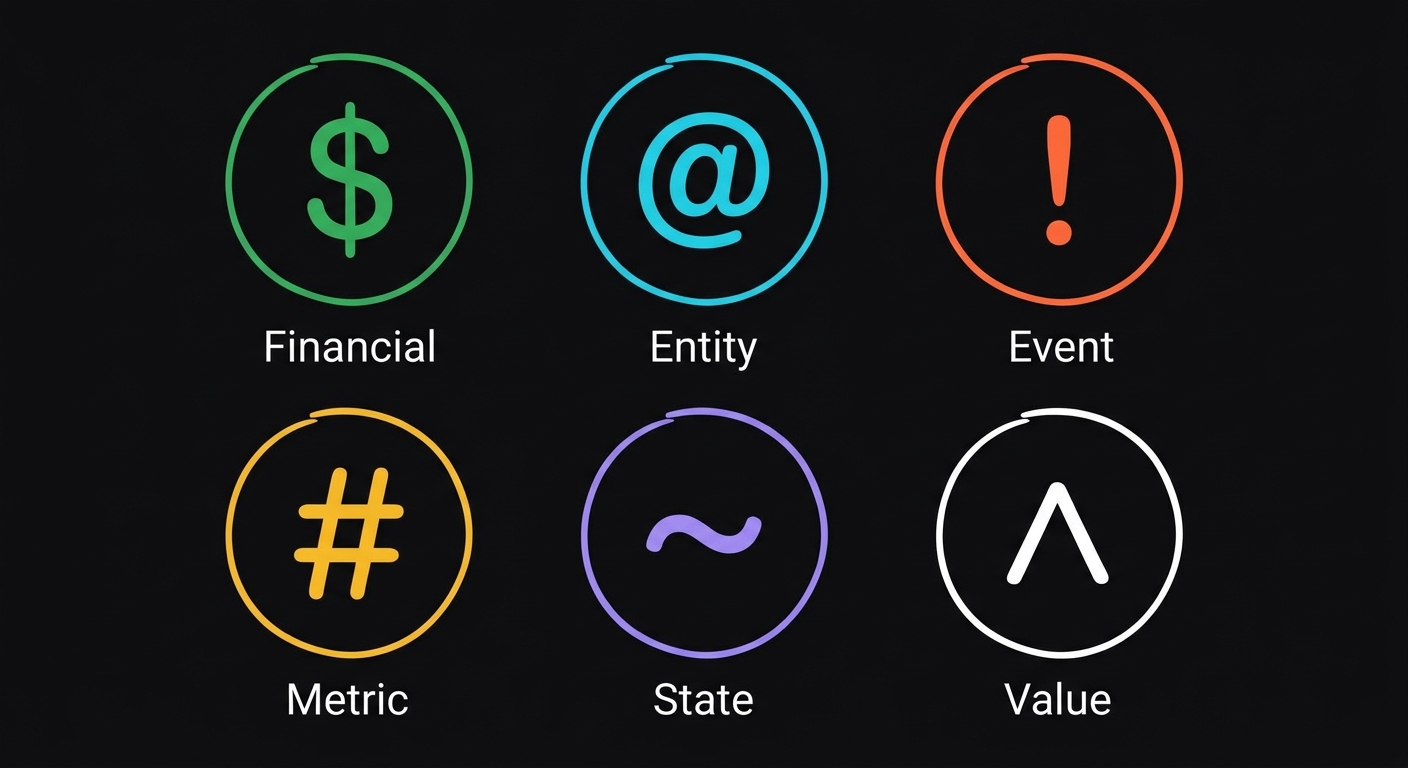

| Types | $ @ # ! ~ ^ |

| Metrics | Full (ratio, packets, saved) |
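
The page does not define how the ratio is computed. Assuming it is original tokens divided by compressed tokens, the three reported metrics (ratio, packets, saved) could be derived roughly as follows. The class, function, and field names are illustrative, and the token counts in the example are made up to reproduce a 1.96x ratio.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CompressionMetrics:
    ratio: float           # original tokens / compressed tokens (assumed definition)
    packets: int           # number of packets produced
    saved: int             # tokens removed by compression
    saved_fraction: float  # share of the original tokens removed


def compression_metrics(original_tokens: int, compressed_tokens: int,
                        packets: int) -> CompressionMetrics:
    """Compute the metrics from token counts supplied by the caller."""
    if original_tokens <= 0 or compressed_tokens <= 0:
        raise ValueError("token counts must be positive")
    saved = original_tokens - compressed_tokens
    return CompressionMetrics(
        ratio=original_tokens / compressed_tokens,
        packets=packets,
        saved=saved,
        saved_fraction=saved / original_tokens,
    )


# Made-up counts chosen so the ratio matches the 1.96x figure in the table.
m = compression_metrics(original_tokens=980, compressed_tokens=500, packets=12)
print(f"{m.ratio:.2f}x, {m.saved} tokens saved ({m.saved_fraction:.0%})")
# prints: 1.96x, 480 tokens saved (49%)
```

Under this reading, a 1.96x ratio corresponds to removing roughly half of the original tokens.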

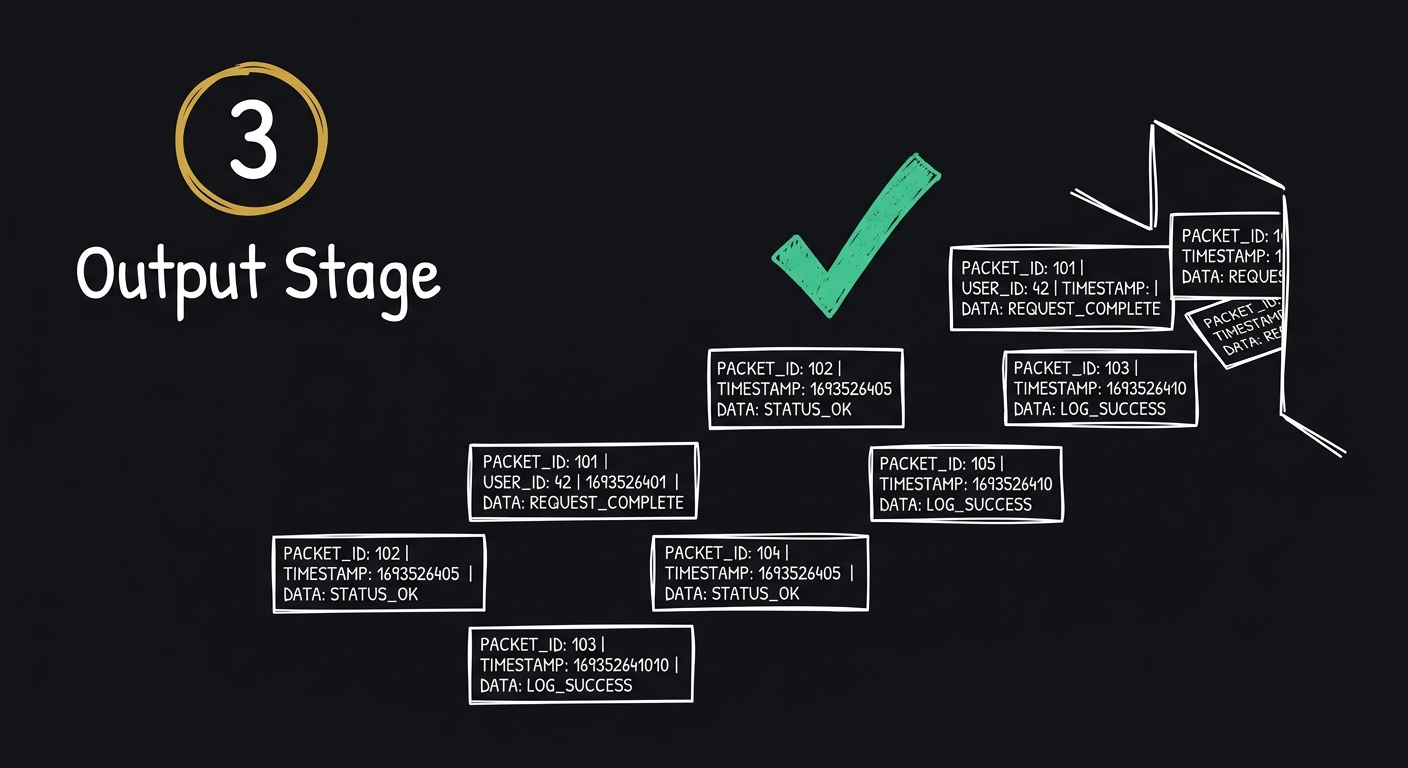

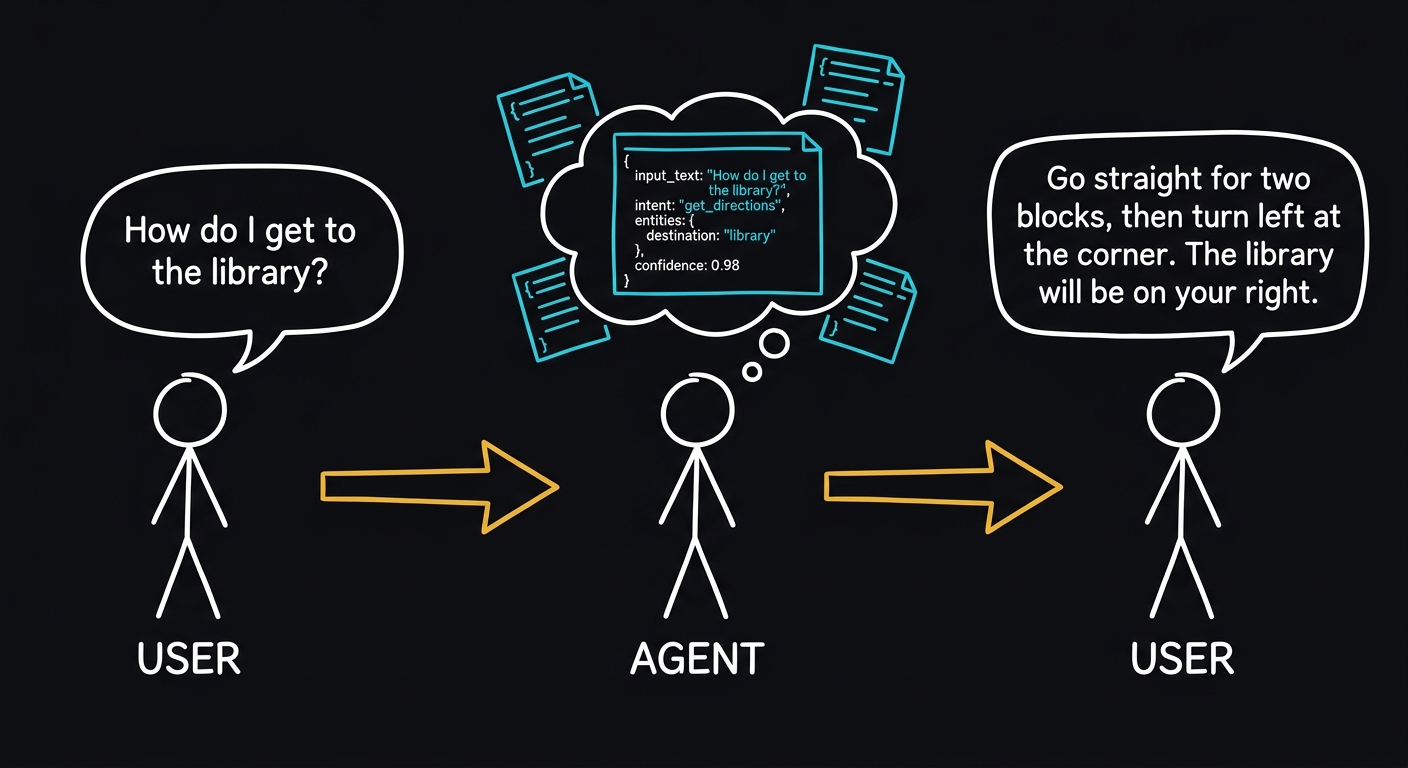

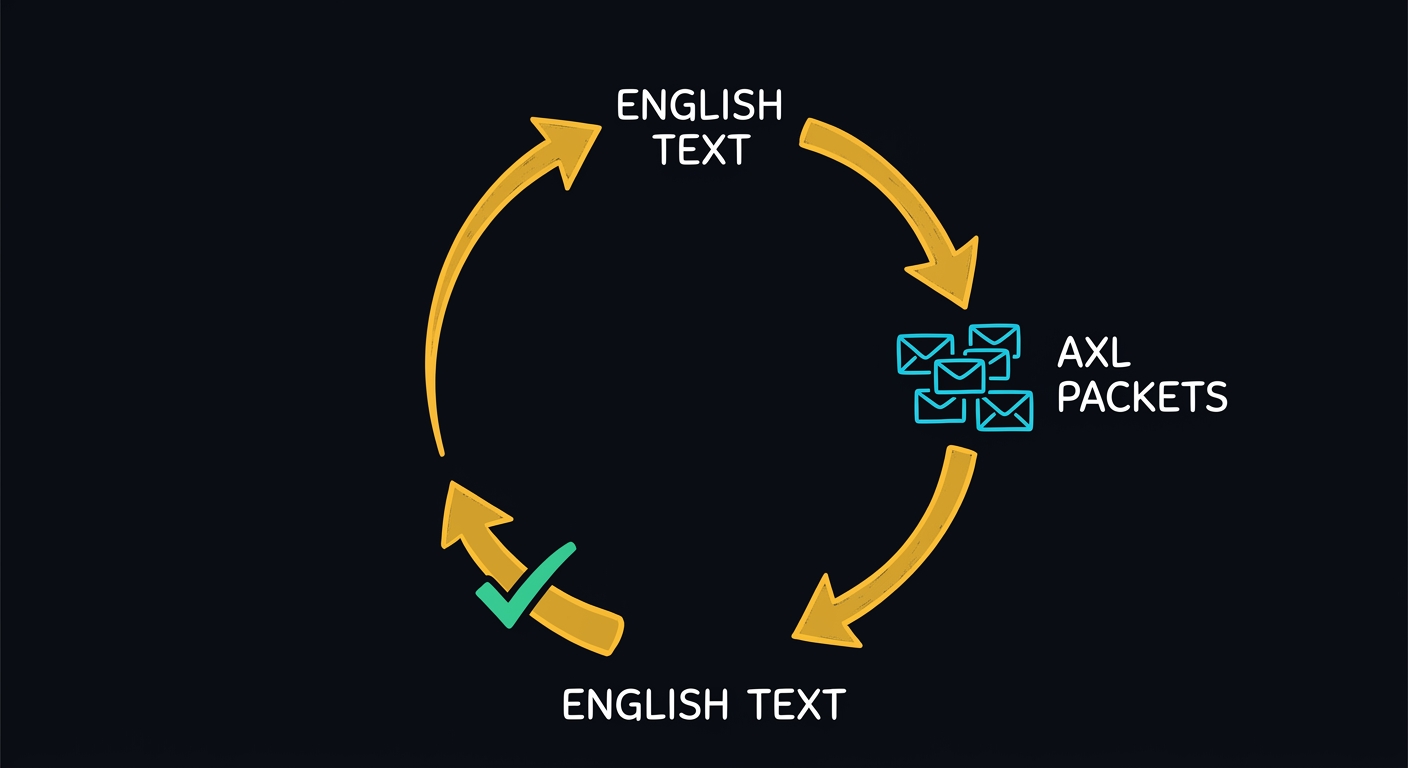

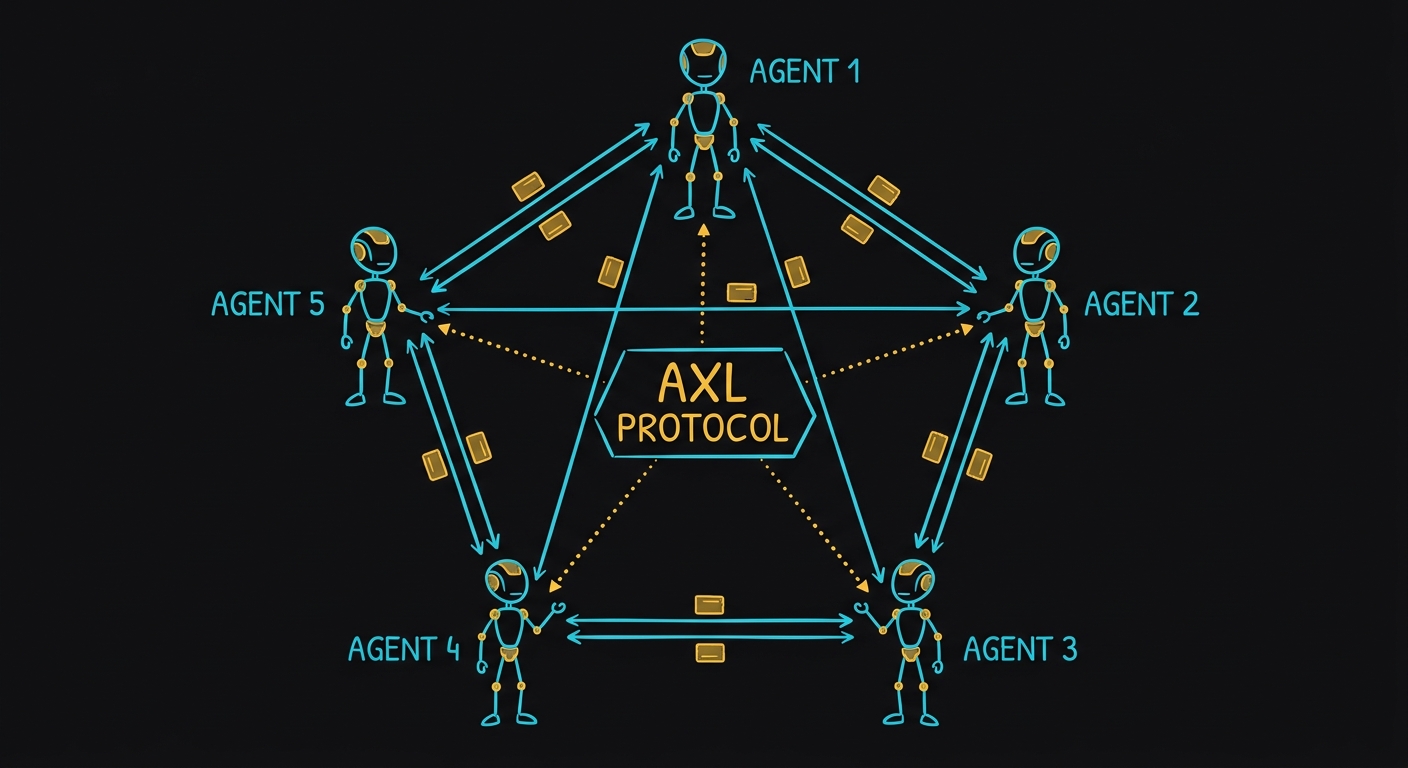

Full AXL Chat Pipeline

Sign in for the 3-step chat experience. Your AI thinks in compressed AXL. You chat in English. Every message runs through the compress-reason-decompress pipeline, saving tokens on every exchange.
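
The compress-reason-decompress flow can be pictured as three functions composed in order. The sketch below is only a shape for that flow: `chat_turn` and the three stand-in steps are hypothetical names, not part of the AXL tooling, and the stand-ins simply wrap their input so the order of calls is visible.

```python
from typing import Callable

Step = Callable[[str], str]


def chat_turn(message: str, compress: Step, reason: Step, decompress: Step) -> str:
    """Run one chat message through compress -> reason -> decompress."""
    packets = compress(message)        # English in, compressed packets out
    reply_packets = reason(packets)    # the model works on the compressed form
    return decompress(reply_packets)   # compressed packets back to English


# Stand-in steps so the sketch runs on its own; real steps would call the
# compressor, the model, and the decompressor.
def fake_compress(text: str) -> str:
    return f"packets({text})"


def fake_reason(packets: str) -> str:
    return f"reply({packets})"


def fake_decompress(packets: str) -> str:
    return f"english({packets})"


print(chat_turn("hello", fake_compress, fake_reason, fake_decompress))
# prints: english(reply(packets(hello)))
```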

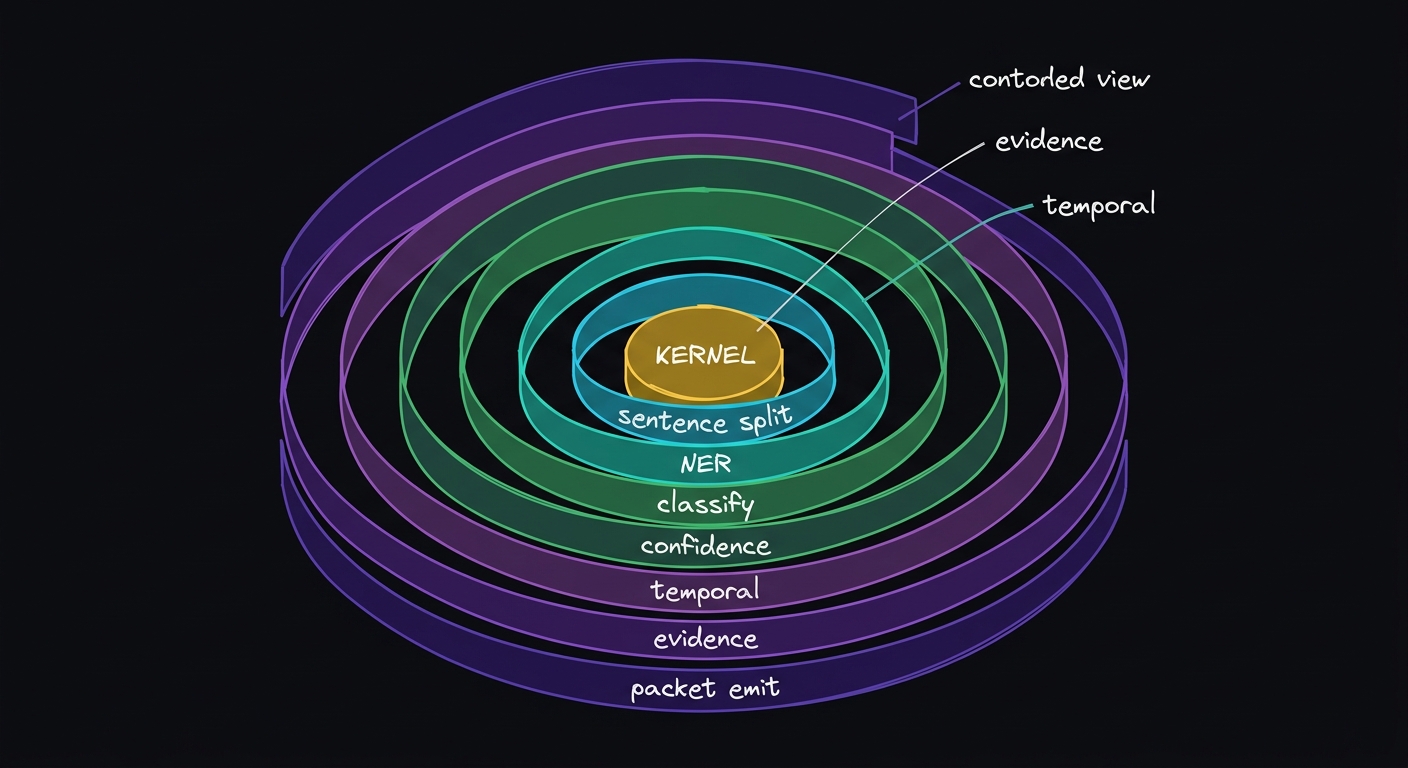

Architecture and Internals

Rosetta v3 Kernel

The Rosetta is a 75-line specification that defines the AXL v3 packet grammar. It is fetched once from axlprotocol.org/v3 and cached in browser memory. When you press Compress, it becomes the system prompt for the LLM call.
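
The fetch-once, keep-in-memory behaviour described above can be sketched in Python. The tool itself runs in the browser, so this is an illustration of the pattern rather than its actual code. `RosettaCache` and `http_fetch` are hypothetical names, and the `https://` scheme is an assumption, since the page gives only the host and path.

```python
from typing import Callable, Optional
from urllib.request import urlopen

ROSETTA_URL = "https://axlprotocol.org/v3"  # scheme assumed, not stated on the page


def http_fetch(url: str) -> str:
    """Download a text document over HTTP(S)."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8")


class RosettaCache:
    """Holds the specification text in memory after the first fetch."""

    def __init__(self, fetch: Callable[[str], str] = http_fetch) -> None:
        self._fetch = fetch
        self._text: Optional[str] = None

    def get(self) -> str:
        # Only the first call touches the network; later calls reuse the copy.
        if self._text is None:
            self._text = self._fetch(ROSETTA_URL)
        return self._text
```

Passing the fetch function in as a parameter keeps the cache testable without network access: a test can supply a stub and check that it is called only once.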

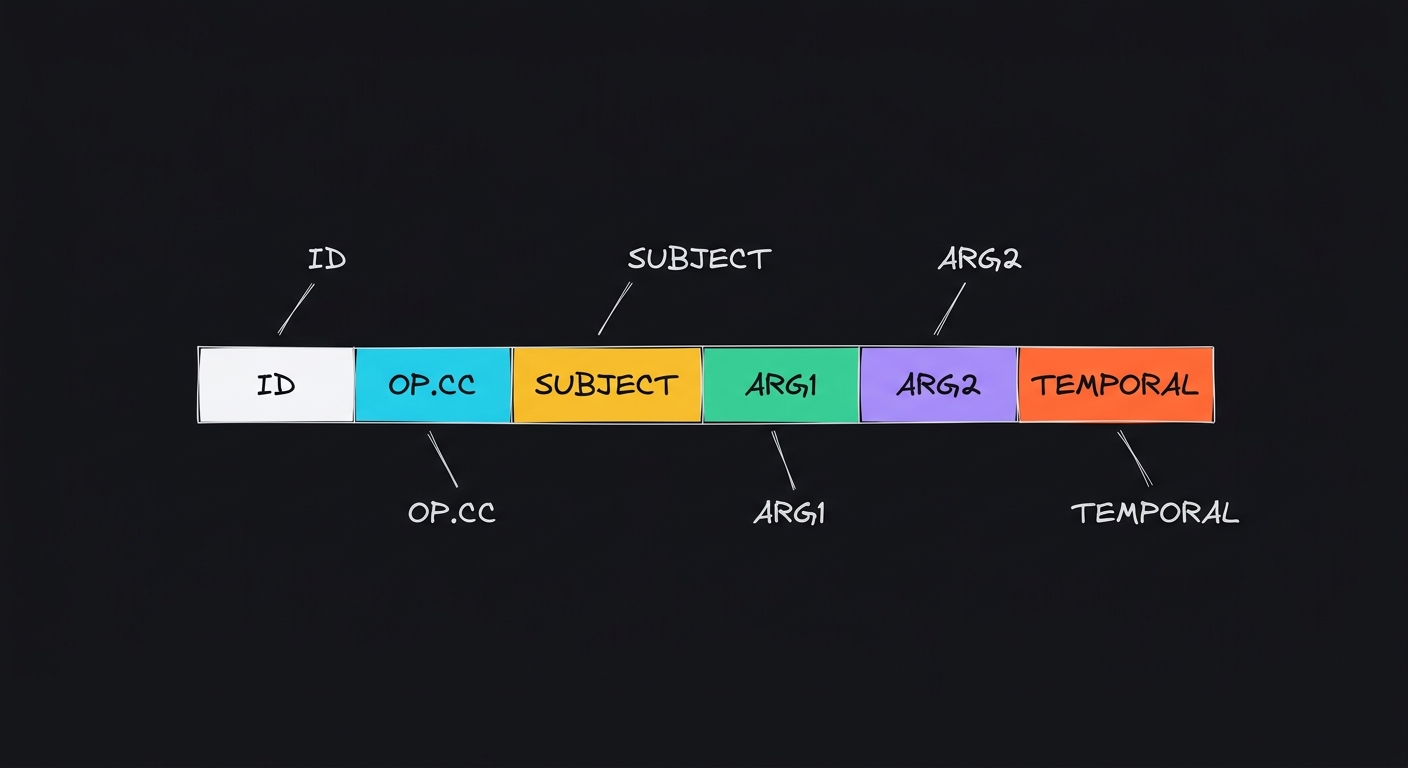

Packet Format

Every AXL v3 packet follows this structure:

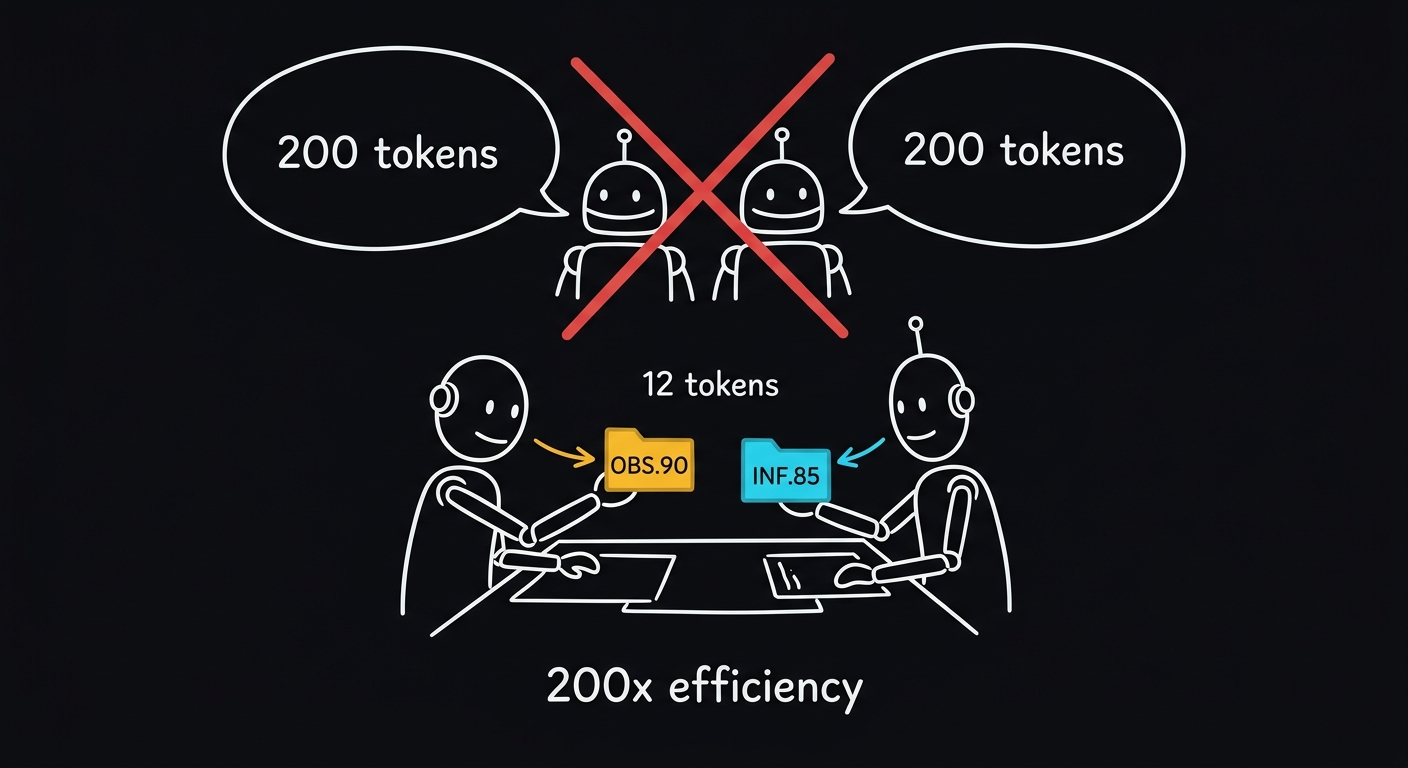

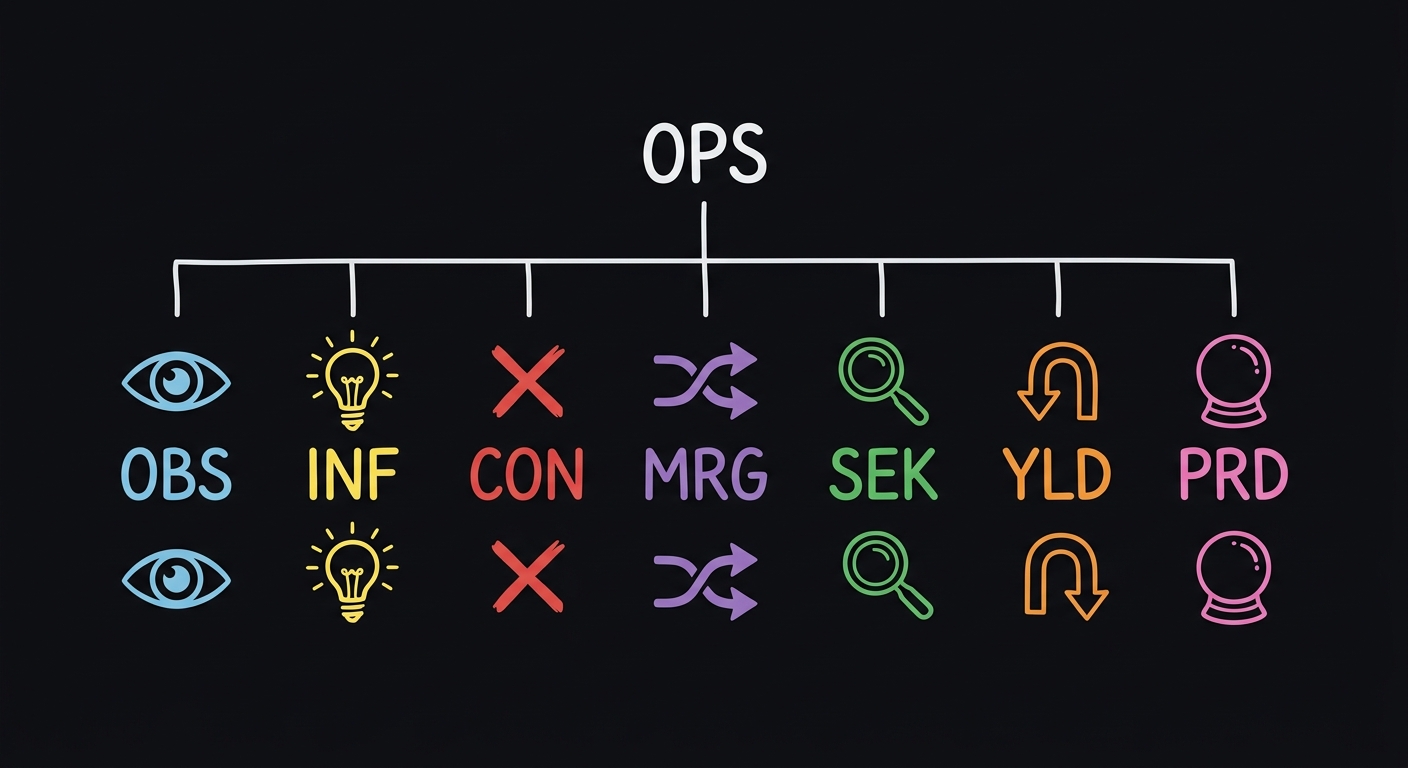

Operations: OBS (observation), INF (inference), CON (contradiction), MRG (merge/synthesis), SEK (knowledge gap), YLD (yield/update), PRD (prediction).
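
For code that consumes packets, the seven operation codes listed above map naturally onto an enumeration. This is a minimal sketch: the codes and their meanings come from the list above, while the `Operation` class and `parse_operation` helper are illustrative names.

```python
from enum import Enum


class Operation(Enum):
    OBS = "observation"
    INF = "inference"
    CON = "contradiction"
    MRG = "merge/synthesis"
    SEK = "knowledge gap"
    YLD = "yield/update"
    PRD = "prediction"


def parse_operation(code: str) -> Operation:
    """Look up an operation by its three-letter code, ignoring case and padding."""
    try:
        return Operation[code.strip().upper()]
    except KeyError:
        raise ValueError(f"unknown operation code: {code!r}") from None


print(parse_operation("obs").value)
# prints: observation
```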

Subject Tags

Evidence and References

API Key Security

Your API key is stored only in your browser tab's memory. It is sent directly to the LLM provider (Anthropic or OpenAI) via HTTPS. Our server never sees, stores, or proxies your key for this tool. By contrast, the registered Chat pipeline stores keys server-side for the 3-step compress/reason/decompress flow.

Decompression

To decompress AXL packets back to English: parse each packet by operation type, extract the tagged subjects and predicates, reconstruct claims in natural language, then merge into coherent prose following the MRG packets as structural guides.
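
The steps above can be sketched in Python, with heavy caveats. The real packet grammar is not reproduced in this text, so the sketch uses a hypothetical simplified line format, `OP|subject|predicate`, which is not AXL v3 syntax. The sentence templates are invented for illustration, subject tags are ignored, and treating each MRG packet as the end of a paragraph is only one possible reading of "structural guides".

```python
from typing import Iterable, List, NamedTuple


class Packet(NamedTuple):
    op: str
    subject: str
    predicate: str


# Hypothetical sentence templates per operation; not taken from the AXL spec.
TEMPLATES = {
    "OBS": "{s} {p}.",
    "INF": "This suggests that {s} {p}.",
    "CON": "However, {s} {p}.",
    "MRG": "Overall, {s} {p}.",
    "SEK": "It is unknown whether {s} {p}.",
    "YLD": "Updated: {s} {p}.",
    "PRD": "{s} will likely {p}.",
}


def parse_packet(line: str) -> Packet:
    """Parse one line in the assumed 'OP|subject|predicate' form."""
    op, subject, predicate = (part.strip() for part in line.split("|", 2))
    if op not in TEMPLATES:
        raise ValueError(f"unknown operation: {op!r}")
    return Packet(op, subject, predicate)


def render(packet: Packet) -> str:
    """Turn one packet into a sentence and capitalise its first letter."""
    sentence = TEMPLATES[packet.op].format(s=packet.subject, p=packet.predicate)
    return sentence[0].upper() + sentence[1:]


def decompress(lines: Iterable[str]) -> str:
    """Rebuild prose, closing a paragraph after each MRG packet."""
    paragraphs: List[str] = []
    current: List[str] = []
    for line in lines:
        if not line.strip():
            continue
        packet = parse_packet(line)
        current.append(render(packet))
        if packet.op == "MRG":
            paragraphs.append(" ".join(current))
            current = []
    if current:
        paragraphs.append(" ".join(current))
    return "\n\n".join(paragraphs)


example = [
    "OBS|latency|rose after the deploy",
    "INF|the deploy|caused the regression",
    "MRG|the team|should roll back",
]
print(decompress(example))
```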